Introduction

Artificial intelligence (AI) is increasingly being integrated into health care, including but not limited to diagnosis and treatment plans, drug development, prediction of health risks and outcomes, health monitoring, and medical imaging. AI can also automate aspects of health care including data processing and administrative tasks, reimbursement decisions, patient interactions, and clinical decision-making. Additionally, individuals are increasingly using AI for health information and advice.

While there has been an increase in funding for and use of AI in health care in recent years, public opinion on AI’s role in providing accurate health information remains mixed. Further, there are concerns that AI may lead to job losses and reduce personalized human-based interactions. Moreover, AI can exacerbate health disparities if the underlying data on which models are built are biased and/or not inclusive. Alternatively, some suggest that AI may help mitigate disparities if it is carefully designed. This brief examines the implications of the growing use of AI for disparities in health and health care and discusses factors that can help reduce AI-related bias in health care.

Growing Use of AI in Health Care

AI tools are becoming increasingly integrated into various aspects of the health care system. For example, hospitals report using AI or predictive models both as administrative tools to perform tasks such as patient scheduling, billing, and medical coding, and as clinician-facing tools to predict health risks and outcomes among patients. A 2025 survey conducted across 16 states found that more than eight in ten (84%) health insurers report using AI or machine learning for fraud detection, utilization management, and prior authorization, among other uses. Health systems also report using AI to “limit claim denials and streamline prior authorization processes.”

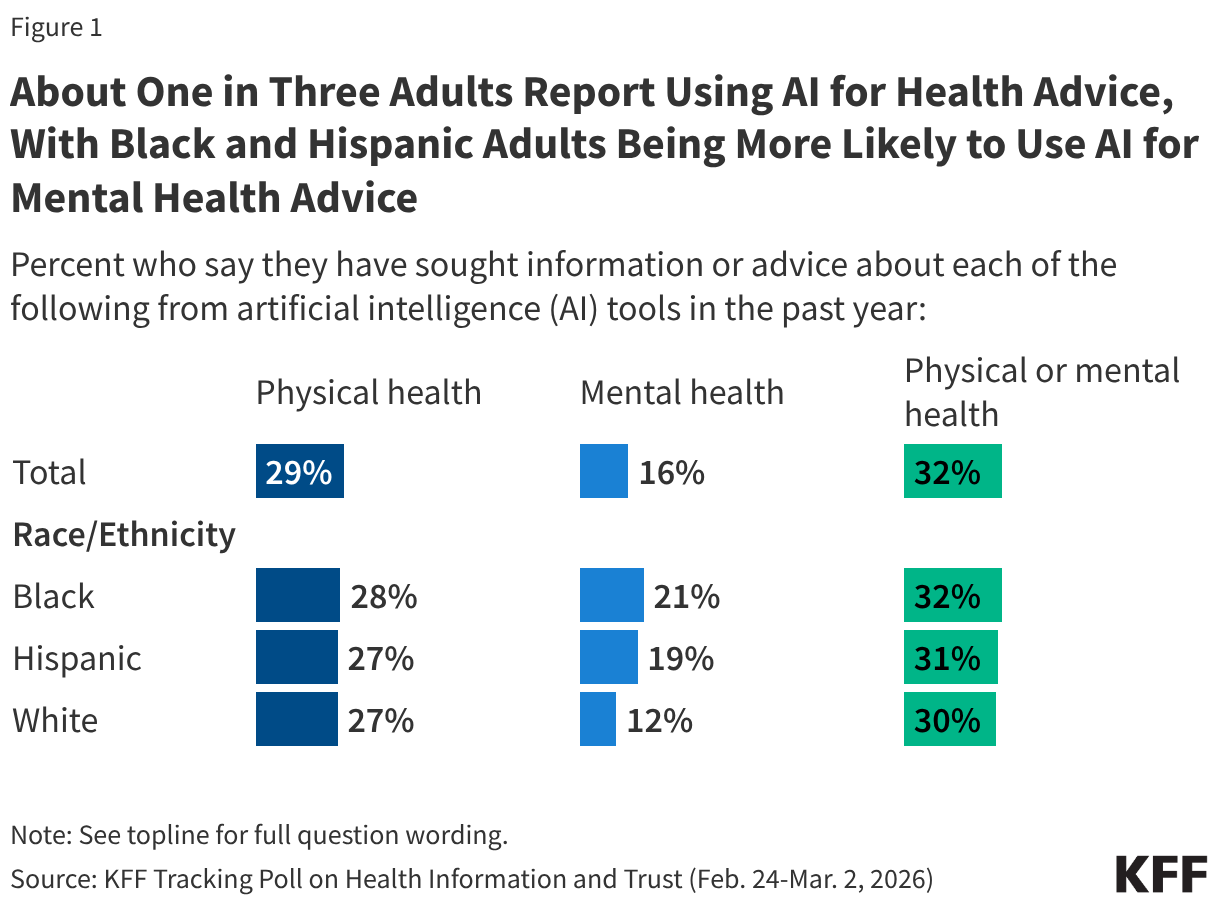

The public is also increasingly using AI for health information and advice, although many have limited trust in the reliability of AI tools. According to OpenAI data from 2026, more than 40 million people globally turn to ChatGPT daily for health information. The data also show that AI chatbots are becoming an important source of information for health insurance and billing advice, with users asking between 1.6 and 1.9 million questions per week regarding plan comparisons, claims, billing, and coverage. Further, a 2026 KFF survey finds that about a third (32%) of adults say they use AI chatbots for health information or advice (Figure 1). However, two-thirds (67%) of adults overall say they trust AI tools or chatbots “not too much” or “not at all” to provide reliable health information, and about three in four (77%) say the same regarding information about mental health and emotional well-being. Rates of use of and trust in AI for physical health advice are similar across racial and ethnic groups. Black and Hispanic adults, though, are more likely than their White counterparts to report using AI for mental health advice. Black adults (29%) are also somewhat more likely than White adults (20%) to say they trust AI tools or chatbots to provide reliable information about mental health and emotional well-being “a great deal” or “a fair amount.”

Impact of AI on Disparities in Health and Health Care

As the use of AI in health care grows, research suggests that AI models can exacerbate racial and ethnic health disparities. A 2024 systematic review of 30 studies over a ten-year time period (from 2013 to 2023) that assessed instances of racial bias perpetuated by AI and machine learning algorithms in health care found a significant association between AI utilization and an exacerbation of racial disparities in health and health care outcomes. These disparities included longer waiting times for appointments, lower rates of success in predicting mental health outcomes, and underdiagnosis of health conditions, particularly for Black and Hispanic people compared to other groups. For example:

- One study found that a machine learning algorithm used for creating patient appointment schedules led to Black patients experiencing 33% longer wait times than other patients. This was due to the model using socioeconomic indicators such as employment status, zip code, insurance type, and past no-show rates, which are correlated with race, to create appointment schedules.

- Another study found that a widely used algorithm to guide health care decisions assigned Black patients the same level of risk as White patients even though Black patients were sicker. The algorithm used health care costs as a proxy for illness, which is an imperfect measure because less money is spent on Black patients who have an equivalent level of need due to inequities in access to care. The authors suggest that addressing this disparity would significantly increase the share of Black patients receiving additional care.

- In diagnostics, AI models may underperform on patients with darker skin because training datasets are more likely to collect data from lighter skinned patients.
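The cost-as-proxy problem in the second example above can be illustrated with a minimal sketch. Everything here is a hypothetical assumption for illustration: the `Patient` fields, the group labels "A" and "B", the dollar amounts, and the 30% spending gap are invented and are not taken from the study. The sketch gives both groups an identical distribution of health need, lowers spending for group B at every level of need, and then compares who is selected for additional care when patients are ranked by cost versus by need.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str               # hypothetical group label, used only for auditing
    chronic_conditions: int  # stand-in for true health need
    annual_cost: int         # stand-in for the cost-based proxy, in dollars


def select_top(patients, key, k):
    """Return the k patients ranked highest by the given scoring function."""
    return sorted(patients, key=key, reverse=True)[:k]


def share_of_group(selected, group):
    """Fraction of the selected patients who belong to the given group."""
    return sum(p.group == group for p in selected) / len(selected)


# Synthetic cohort (illustrative assumption): both groups have the same
# range of need, but spending for group B is 30% lower at each level of
# need, mimicking unequal access to care.
COST_PER_CONDITION = 1000
SPENDING_PERCENT = {"A": 100, "B": 70}

patients = [
    Patient(g, n, n * COST_PER_CONDITION * SPENDING_PERCENT[g] // 100)
    for g in ("A", "B")
    for n in range(1, 11)
]

# Rank the same cohort two ways and keep the top 6 for "additional care".
by_cost = select_top(patients, key=lambda p: p.annual_cost, k=6)
by_need = select_top(patients, key=lambda p: p.chronic_conditions, k=6)

print("Group B share when ranking by cost:", share_of_group(by_cost, "B"))
print("Group B share when ranking by need:", share_of_group(by_need, "B"))
```

In this toy cohort, group B makes up half of the patients selected when ranking by need but only a third of those selected when ranking by cost, even though the two groups are equally sick by construction. Real risk models are far more complex, so this shows only the direction of the effect, not its size.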

In the systematic review, the authors identified four primary and interrelated causes for AI-perpetuated disparities including: biased underlying datasets, historical and systemic biases that can be encoded into AI when it is trained on these data, algorithmic design bias, and biased application and/or deployment of AI.

These AI-related racial and ethnic disparities also extend into mental health diagnosis and treatment recommendations. For example, language-based AI models underperformed at predicting depression severity for Black patients compared to White patients. One explanation is that the two groups use different types of language to express depression symptoms and that AI is often trained primarily on language used by White patients, who make up a larger share of the population and for whom more data are available. However, researchers found that even models trained exclusively on the depression-related social media language used by Black individuals performed poorly at predicting depression severity in that group, while models trained in the same way on social media data from White individuals performed well at predicting that group’s depression severity. The authors suggest that this could be due to other factors beyond language, such as paralinguistic features like speech rate or tone, serving as better predictors for depression severity among Black individuals. A separate study found that several AI models made inferior treatment recommendations for Black mental health patients when the patient’s race was explicitly or implicitly mentioned, likely due to biases embedded in the data on which these models are trained. An AI model used for suicide prediction also performed worse for Black patients, with researchers finding that it successfully detected 62% of suicides among White patients but only 10% among Black patients.
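The detection rates reported for the suicide prediction model are group-level sensitivities: the share of actual cases that the model flagged. The sketch below shows one way such a per-group audit could be computed. The record format, function names, group labels, and all counts are hypothetical assumptions. The counts are synthetic, chosen only so that the resulting rates mirror the 62% and 10% figures cited above, and are not the study's data.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Share of actual positive cases that the model flagged, per group.

    records: iterable of (group, actual, predicted) with boolean outcomes.
    """
    true_pos = defaultdict(int)
    actual_pos = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            actual_pos[group] += 1
            if predicted:
                true_pos[group] += 1
    return {g: true_pos[g] / actual_pos[g] for g in actual_pos}


def accuracy_by_group(records):
    """Share of all predictions that match the actual outcome, per group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, actual, predicted in records:
        total[group] += 1
        correct[group] += (actual == predicted)
    return {g: correct[g] / total[g] for g in total}


def make_records(group, detected, missed, true_negatives):
    """Build synthetic (group, actual, predicted) rows from simple counts."""
    return (
        [(group, True, True)] * detected
        + [(group, True, False)] * missed
        + [(group, False, False)] * true_negatives
    )


# Synthetic counts (illustrative assumption, not study data).
records = (
    make_records("group_1", detected=31, missed=19, true_negatives=950)
    + make_records("group_2", detected=5, missed=45, true_negatives=950)
)

sensitivity = sensitivity_by_group(records)
accuracy = accuracy_by_group(records)
for group in sensitivity:
    print(group, "sensitivity:", sensitivity[group], "accuracy:", accuracy[group])
```

With these synthetic counts, sensitivity is 0.62 for one group and 0.10 for the other, while overall accuracy is about 0.98 and 0.96. Because the outcome is rare, headline accuracy can look similar across groups even when the model misses most cases in one of them, which is one reason audits often report metrics separately by group.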

Research has found that the use of race in clinical algorithms may also affect the reliability of AI tools for certain groups. AI models are often trained on clinical algorithms used to predict diagnoses and treatments, which in some cases have historically used race as a factor and resulted in worse outcomes for some groups. One of the most well-known examples of this practice is the use of separate measures of kidney function (i.e., estimated glomerular filtration rates, eGFRs) for Black patients compared to non-Black patients, which resulted in many Black patients not receiving a kidney transplant. Another study found that removing the use of race from spirometry, a test used to measure lung function, would increase the number of Black people who would qualify for lung disease diagnosis and disability payments. Further, a 2019 study found that an algorithm used to predict the likelihood of safely having a vaginal birth after cesarean delivery (VBAC) incorrectly predicted a lower likelihood of VBAC success for Black and Hispanic women than for White women, which led to doctors performing more cesarean deliveries on Black and Hispanic women than on White women. A growing number of organizations and health care institutions have recently moved to remove race from these algorithms. However, to the extent AI is trained on algorithms or results from algorithms that use race as a factor, AI could perpetuate these racial biases.

Research also shows that AI models may promote racial and ethnic health misinformation, leading to misdiagnosis or delayed care. A study of multiple AI chatbots found instances of the tools promoting “race-based medicine” and false claims about race, such as differences in skin thickness between Black and White patients. Further, all AI chatbots included in the study incorrectly stated that Black men’s and women’s normal lung function tends to be lower than that of their White counterparts, reflecting the chatbots’ training on the underlying race-biased algorithm used to calculate lung function.

If carefully designed, AI has the potential to help address disparities. For example, AI-driven decision support tools can be used to identify and correct real-time clinician bias, particularly during high-stress periods when “cognitive load” often leads to disparities in documentation and diagnosis. By automating administrative tasks such as scheduling and billing, AI could help reduce staff burnout at safety-net hospitals, which disproportionately treat underserved groups. AI can also be used to identify the social determinants that drive health inequities through the analysis of large amounts of population data, which can then help guide interventions to address disparities. AI can also help identify disparities in health outcomes that might otherwise go unrecognized. For example, in a recent study, researchers used machine learning to identify excess deaths due to COVID-19 that were unrecognized in official mortality reports and found that these unrecognized deaths occurred disproportionately among people of color, those with lower educational attainment, and those with lower household incomes, among other factors.

Careful design and inclusive data collection; a diverse workforce; and a focus on ethical considerations, transparency, and a collaborative approach are factors that may help mitigate AI biases in health care. Identification and mitigation of biases during AI models’ development, as well as continuous monitoring and inclusion of more representative data over time, can help to address AI-related bias in health care. Further, having a diverse and representative data science workforce and training AI developers to recognize biases in algorithm development also play an important role in developing equitable AI models. Developing and enforcing ethical standards for AI in health care that inform how AI models and algorithms will be designed to help reduce bias and discrimination and establishing accountability in the creation and use of those algorithms may also help to reduce algorithmic bias. Further, collaborating with a wide range of stakeholders, such as health care workers, policymakers, community members, and ethicists when developing AI tools can offer a broader and more nuanced understanding of the impact of AI on health disparities.

Researchers and other experts have increased their focus on the creation of frameworks and coalitions to help guide equitable use of AI in health care. In 2023, the Coalition for Health AI released guidance for the implementation of AI tools that centers equity, fairness, and ethics. The guidance includes recommendations on developing a common set of principles to guide the development and use of AI tools and a coalition or advisory board to help ensure equity and facilitate trustworthiness in health-related AI. In early 2024, following a series of discussions at the 2023 Responsible AI for Social and Ethical Healthcare (RAISE) international symposium, experts in health, medicine, technology, and policy issued a call for “ongoing dialogue and ethical commitment from all stakeholders” to ensure that AI in health care is inclusive. In 2024, the Council of Medical Specialty Societies and the Doris Duke Foundation created the Encoding Equity alliance, whose aims are to identify the incorrect use of race in clinical algorithms and guidelines, design “accurate and equitable decision tools,” and collect and disseminate evidence on the use of AI in health care to promote health equity.

While there has been increasing activity at the state level to regulate AI in health care, the Trump administration has prioritized deregulation of AI, reduced or eliminated equity requirements for AI in health care, and is challenging state regulations that impose strict anti-bias requirements. President Trump issued Executive Order (EO) 14148 in January 2025, which rescinded a number of Biden administration EOs, including those related to equitable use of AI in health care. He replaced those EOs with EO 14179, which shifts focus away from “equity” mandates and “algorithmic fairness” and towards “minimally burdensome” requirements to encourage innovation. While numerous states have recently introduced or enacted legislation related to AI in health care, the Trump administration is challenging state laws that impose strict bias audits or transparency requirements for AI via EO 14365, issued in December 2025. Under the EO, the Department of Justice created an AI Litigation Task Force in January 2026 to challenge states with AI laws found to be inconsistent with federal policy. The EO also directs the Secretary of Commerce to restrict federal grant money, specifically the Broadband Equity Access and Deployment (BEAD) Program funds, in states with “onerous” AI laws. For example, Colorado passed the “Consumer Protections for Artificial Intelligence” law in 2024, which, among other things, requires health care providers and health insurers to take steps to prevent algorithmic discrimination. However, implementation of the law has been postponed due to legal challenges.